Building Anti-Fraud Competency Without Losing Control of Your Data

Published on: 2026-03-28 01:04:05

Fraud teams face a practical choice. They can buy anti-fraud capability as a managed black box, or they can build core anti-fraud competency in-house and use external data sources where needed. Both paths can work, but they lead to very different outcomes over time.

The main risk in the buy-heavy model is not just vendor lock-in. It is data lock-in. Many anti-fraud vendors enrich raw events with device, identity, behavioral, network, and consortium signals. That enriched data becomes the most valuable asset in the stack because it improves detection, case review, rule tuning, and model performance. If that data stays inside the vendor environment, your team does not fully own the decision history that shaped approvals, declines, and investigations. Exporting it later is often incomplete, expensive, or impossible at the level of detail you need.

This is why anti-fraud competency should be treated as a long-term operational capability. You can buy data, signals, and specialist services. But your company should control the decision logic, the decision traces, and the enriched event history used to evaluate fraud risk.

If you are designing or rebuilding this capability, start with the customer journey. Fraud does not happen in one place. It appears at weak points across onboarding, login, profile changes, payments, withdrawals, loan applications, account recovery, and support interactions. The goal is to identify those weak points, define what normal behavior looks like, detect abnormal behavior early, and route every important event through deterministic rules that can be monitored, audited, and changed fast.

Build vs. buy anti-fraud capability

Most companies do not choose between building everything and buying everything. The practical question is what to own and what to source.

What buying gets you

- Fast access to fraud signals such as device intelligence, IP risk, email profiling, consortium data, and identity checks.

- Faster initial deployment if the vendor provides prebuilt rules, workflows, and risk scoring.

- Specialist coverage for attack patterns your team has not seen before.

- Operational support for teams with limited fraud expertise.

What buying often costs you

- Opaque logic if the vendor returns only a score or recommendation.

- Slow change cycles when your team needs vendor help to update rules or decision flows.

- Data lock-in when enriched fraud data is stored in the vendor platform and cannot be exported in usable form.

- Weak traceability if analysts cannot inspect the exact reasons behind a decision.

- High switching costs when core workflows depend on vendor-specific objects, APIs, and historical data structures.

The strongest operating model is usually this: buy external signals, own the decision layer. That means your company gathers raw and enriched events, stores decision traces, and evaluates risk using a flexible rules platform. Vendors remain replaceable. Your anti-fraud logic and decision history stay with you.

For a practical view of data sources that can feed this layer, see "Data sources you can use" and "Integrating external data sources into Decisimo".

Why data ownership matters more than teams expect

Fraud programs improve through feedback loops. You collect event data, run rules, review outcomes, confirm fraud or false positives, and refine the decision logic. That loop only works well if you retain the underlying evidence.

The most valuable anti-fraud data is rarely the original application form or transaction request by itself. The real value sits in the enriched data attached to those events:

- Device fingerprint and device consistency over time

- IP address history, geolocation, ASN, proxy, VPN, and hosting signals

- Email age, domain quality, breach exposure, and deliverability traits

- Behavioral patterns such as typing speed, navigation path, and form completion timing

- Velocity patterns across accounts, identities, devices, and payment instruments

- Link analysis between applications, beneficiaries, addresses, and phone numbers

- Decision outcomes, analyst dispositions, and chargeback or fraud-confirmed feedback

If a vendor controls that data, your team cannot easily answer basic questions. Which signals mattered most in confirmed fraud? Which rules created false positives? Which attack pattern emerged in the last 30 days? What changed after a new onboarding flow went live?

Owning this event history lets you improve controls over time. It also matters for auditability. If you automate decisions that affect access, payments, lending, or onboarding, you need decision traces that show what data was evaluated, which rules fired, and why the final outcome was reached. For more on that, see "Tracing Models and Decisions".

How to run anti-fraud as a disciplined operating function

Strong anti-fraud operations start with a map, not a model. First map the customer journey. Then define where fraud can enter, what normal behavior looks like, what abnormal behavior looks like, and what evidence you need to collect at each stage.

1. Map the customer journey as milestones

Break the journey into observable milestones. The exact list depends on your business model, but a typical flow includes:

- Session start

- Landing page or app open

- Registration started

- Email or phone submitted

- OTP sent and verified

- Identity document uploaded

- Face match completed

- Address entered

- Bank account or card linked

- Application submitted

- Manual review triggered

- Approval or decline

- First transaction, disbursement, or withdrawal

- Login from new device

- Password reset or account recovery

- Profile change, beneficiary change, or payout destination change

Each milestone should produce a structured event. That event should include customer identifiers, timestamp, device data, network data, session data, request metadata, payload attributes, and the result of any external checks.
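As a minimal sketch of what such a structured event could look like, the following uses a Python dataclass. The class name `MilestoneEvent`, the field names, and the sample values are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Any
import json


@dataclass
class MilestoneEvent:
    """One structured event per journey milestone (hypothetical field names)."""
    milestone: str                      # e.g. "otp_verified"
    customer_id: str
    session_id: str
    occurred_at: datetime
    device: dict[str, Any] = field(default_factory=dict)
    network: dict[str, Any] = field(default_factory=dict)
    request_meta: dict[str, Any] = field(default_factory=dict)
    payload: dict[str, Any] = field(default_factory=dict)
    external_checks: dict[str, Any] = field(default_factory=dict)

    def to_json(self) -> str:
        # Convert to a plain dict and make the timestamp JSON-serializable.
        record = asdict(self)
        record["occurred_at"] = self.occurred_at.isoformat()
        return json.dumps(record, sort_keys=True)


# Sample values are invented for illustration only.
event = MilestoneEvent(
    milestone="otp_verified",
    customer_id="cust-123",
    session_id="sess-456",
    occurred_at=datetime(2026, 1, 15, 10, 30, tzinfo=timezone.utc),
    device={"fingerprint": "fp-abc", "os": "Android"},
    network={"ip": "203.0.113.7", "vpn": False},
    external_checks={"email_risk": {"provider": "example", "score": 12}},
)
serialized = event.to_json()
```

Keeping device, network, and external check data as separate groups makes it easier to add or swap a provider without changing the rest of the event.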

2. Define normal and abnormal behavior

You cannot detect abnormal behavior unless you first define normal behavior in a measurable way. Normal behavior is not a vague idea. It is a set of observed ranges and patterns tied to the journey stage.

Examples:

- A new applicant usually moves from registration to OTP verification within a predictable time range that you can measure from historical data.

- Most legitimate users complete form fields in a human pattern, not in a sub-second automated burst.

- A returning customer usually logs in from a familiar device, geography, and time pattern.

- Bank account changes and payout requests usually do not happen seconds apart.
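One way to make the first example measurable is to derive a normal range from observed durations. The sketch below uses percentiles from Python's standard `statistics` module. The function names, the 5th and 95th percentile bounds, and the sample durations are assumptions chosen for illustration.

```python
import statistics


def normal_range(durations_seconds, lower_pct=5, upper_pct=95):
    """Return (low, high) percentile bounds for observed durations."""
    # quantiles(n=100) returns 99 cut points; index i-1 is the i-th percentile.
    cuts = statistics.quantiles(durations_seconds, n=100, method="inclusive")
    return cuts[lower_pct - 1], cuts[upper_pct - 1]


def is_within_normal(value, bounds):
    low, high = bounds
    return low <= value <= high


# Invented sample: seconds from registration to OTP verification.
observed = [22, 25, 31, 34, 38, 41, 45, 52, 60, 75]
low, high = normal_range(observed)

typical = is_within_normal(40, (low, high))       # a human-paced applicant
too_fast = not is_within_normal(0.8, (low, high))  # a sub-second completion
```

In practice the bounds would be computed per journey stage and per segment, and recomputed as flows change.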

Abnormal behavior may include:

- Very short timing between milestones, suggesting automation or scripted attacks

- Repeated applications from one device with small identity changes

- High mismatch between claimed identity, device profile, and network profile

- Many accounts linked to one phone, card, IP range, or address pattern

- Sudden change in behavior after account takeover signals

- Document, selfie, email, and device data that do not fit together
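The "many accounts linked to one phone, card, IP range, or address" pattern can be checked with a simple grouping step. This is a minimal sketch, assuming applications are available as dictionaries. The function name, field names, and threshold are hypothetical.

```python
from collections import defaultdict


def shared_attribute_clusters(applications, attribute, threshold):
    """Return attribute values shared by at least `threshold` distinct customers."""
    customers_by_value = defaultdict(set)
    for app in applications:
        value = app.get(attribute)
        if value:
            customers_by_value[value].add(app["customer_id"])
    return {
        value: sorted(customers)
        for value, customers in customers_by_value.items()
        if len(customers) >= threshold
    }


# Invented sample data.
applications = [
    {"customer_id": "c1", "device_id": "d1", "phone": "+100"},
    {"customer_id": "c2", "device_id": "d1", "phone": "+101"},
    {"customer_id": "c3", "device_id": "d1", "phone": "+102"},
    {"customer_id": "c4", "device_id": "d2", "phone": "+103"},
]
clusters = shared_attribute_clusters(applications, "device_id", threshold=3)
```

Here three customers share device `d1`, so that device is returned as a cluster. The same function can be run against phone, card, or address fields.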

For lending-specific examples, see "Prevent identity and synthetic fraud in consumer lending" and "Anti-fraud decision logic for consumer lending / BNPL".

3. Collect full technical details, not just business outcomes

Many teams log that a user was approved, declined, or referred. That is not enough. To improve anti-fraud controls, you need the technical details behind each event.

At minimum, collect:

- Timestamps for every milestone

- Time deltas between milestones

- Device identifiers, device fingerprint, browser, OS, app version, screen traits

- Network signals such as IP, ASN, proxy or VPN indicators, geolocation, and connection type

- Identity attributes submitted by the user and any verified variants

- Behavioral signals where available, including interaction speed and event sequence

- External data responses in full, not only the final score

- Rule outcomes, including which rules fired and their input values

- Analyst decisions and final fraud labels

This is where many programs fail. They collect only vendor scores and final statuses. That removes the detail needed to tune rules, compare providers, or reconstruct decisions later.

Use timing as a fraud signal

Timing between stages is often one of the most underused fraud indicators. It is also one of the easiest to collect if your event design is sound.

Examples of useful timing signals include:

- Time from session start to registration

- Time from registration to OTP verification

- Time from form start to form submit

- Time between ID upload and selfie completion

- Time from account creation to first payment attempt

- Time from password reset to payout destination change

- Time between repeated failed attempts from the same device or IP

Fraud attacks often leave timing signatures. Bots are fast and consistent. Fraud farms can be fast in some stages and delayed in others. Account takeovers often show abrupt transitions from login or reset to risky account changes. Mule activity may cluster actions within short windows across linked accounts.

These patterns should feed deterministic rules and decision tables, not sit unused in logs.
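As a rough illustration, timing signals can be computed from milestone timestamps and compared with minimum expected durations. The milestone names, thresholds, and function names below are assumptions for the sketch, not recommended values.

```python
from datetime import datetime, timedelta


def milestone_deltas(timestamps, pairs):
    """Seconds elapsed between milestone pairs; None when either side is missing."""
    deltas = {}
    for start, end in pairs:
        key = f"{start}->{end}"
        if start in timestamps and end in timestamps:
            deltas[key] = (timestamps[end] - timestamps[start]).total_seconds()
        else:
            deltas[key] = None
    return deltas


def timing_flags(deltas, min_seconds):
    """Return the pairs completed faster than the configured minimum."""
    return sorted(
        key for key, value in deltas.items()
        if value is not None and key in min_seconds and value < min_seconds[key]
    )


# Invented sample timestamps for one customer.
t0 = datetime(2026, 1, 15, 10, 0, 0)
timestamps = {
    "registration_started": t0,
    "otp_verified": t0 + timedelta(seconds=40),
    "password_reset": t0 + timedelta(hours=2),
    "payout_destination_changed": t0 + timedelta(hours=2, seconds=15),
}
pairs = [
    ("registration_started", "otp_verified"),
    ("password_reset", "payout_destination_changed"),
]
deltas = milestone_deltas(timestamps, pairs)
flags = timing_flags(deltas, {
    "registration_started->otp_verified": 5,
    "password_reset->payout_destination_changed": 300,
})
```

In this sample the payout destination changes 15 seconds after a password reset, which falls below the assumed 300-second minimum and is flagged. The 40-second registration step is not.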

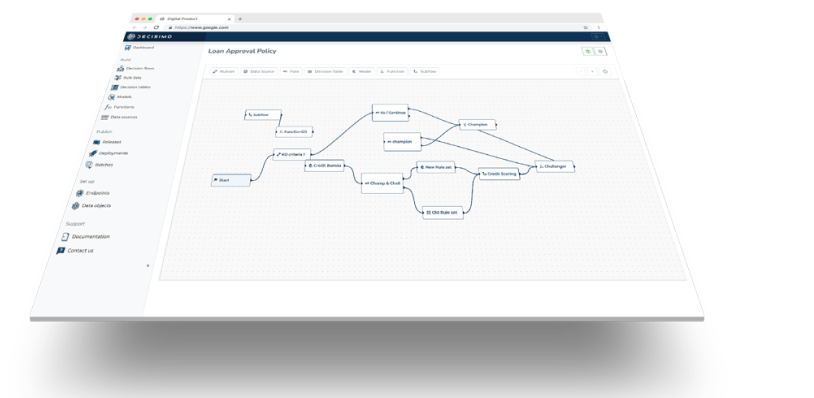

Evaluate each stage with a decision engine

Once events are structured, the next step is to evaluate each milestone with flexible decision logic. This is where a decision engine or business rules platform becomes central.

Instead of relying on one static fraud score, evaluate risk at each stage using explicit rulesets. For example:

- At registration, screen for disposable email patterns, device anomalies, and abnormal velocity.

- At onboarding, combine identity checks, document checks, device intelligence, and timing rules.

- At transaction or disbursement, evaluate account age, behavior since onboarding, payout changes, beneficiary risk, and linked-entity patterns.

- At login or recovery, compare current signals with known-good historical patterns.

This approach gives teams several advantages:

- Stage-specific controls instead of one blunt score

- Fast rule changes when new attack patterns appear

- Clear traceability for every rule fired

- Better tuning because analysts can inspect false positives and misses

- Vendor flexibility because external signals can be swapped without rewriting the full operating model
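The idea of stage-specific rulesets with a trace of every rule fired can be sketched in a few lines. This is a simplified illustration, not how any particular decision engine works. The rule IDs, attribute names, outcome names, and severity ordering are all assumptions.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    inputs: tuple[str, ...]                      # attributes recorded in the trace
    predicate: Callable[[dict[str, Any]], bool]  # True means the rule fires
    outcome: str


# Assumed ordering: the most severe fired outcome wins.
SEVERITY = {"approve": 0, "step_up": 1, "review": 2, "block": 3}

RULESETS = {
    "registration": [
        Rule("disposable_email", ("email_disposable",),
             lambda e: e.get("email_disposable", False), "review"),
        Rule("device_velocity", ("accounts_on_device",),
             lambda e: e.get("accounts_on_device", 0) >= 3, "block"),
    ],
    "login": [
        Rule("new_device", ("device_known",),
             lambda e: not e.get("device_known", True), "step_up"),
    ],
}


def evaluate(stage, event):
    """Run the ruleset for a stage and return the outcome with a trace."""
    fired = []
    outcome = "approve"
    for rule in RULESETS.get(stage, []):
        if rule.predicate(event):
            fired.append({
                "rule_id": rule.rule_id,
                "outcome": rule.outcome,
                "inputs": {name: event.get(name) for name in rule.inputs},
            })
            if SEVERITY[rule.outcome] > SEVERITY[outcome]:
                outcome = rule.outcome
    return {"stage": stage, "outcome": outcome, "fired": fired}


decision = evaluate("registration",
                    {"email_disposable": True, "accounts_on_device": 1})
```

Because each fired rule records its input values, an analyst can later see why the registration was sent to review without re-running the logic.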

If you want a simple starting point, see "Working with Basic Rules". If your fraud program uses weighted attributes, scorecards can also help. See "Implementing scorecards in rule engines".

A practical operating model for anti-fraud

A workable anti-fraud operating model usually includes these components:

Event collection layer

Capture customer journey milestones, technical metadata, and third-party responses in a consistent event schema.

Enrichment layer

Call external services for device, identity, email, IP, company, document, or behavioral checks. Keep the raw response and the mapped attributes you use in decision logic.
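A minimal sketch of keeping both the raw response and the mapped attributes is shown below. The provider name, response shape, and attribute mapping are invented for illustration. Real vendor responses will differ.

```python
from datetime import datetime, timezone


def dig(data, path):
    """Follow a tuple of keys into nested dicts; return None if any key is missing."""
    for key in path:
        if not isinstance(data, dict) or key not in data:
            return None
        data = data[key]
    return data


def build_enrichment_record(provider, raw_response, mapping):
    """Store the untouched raw response next to the attributes used in rules."""
    return {
        "provider": provider,
        "received_at": datetime.now(timezone.utc).isoformat(),
        "raw": raw_response,
        "attributes": {name: dig(raw_response, path) for name, path in mapping.items()},
    }


# Hypothetical vendor response and mapping.
raw = {"score": 71, "ip": {"asn": 64500, "is_vpn": True}, "model_version": "2.3"}
record = build_enrichment_record(
    "hypothetical_ip_vendor",
    raw,
    {
        "ip_risk_score": ("score",),
        "ip_is_vpn": ("ip", "is_vpn"),
        "ip_country": ("ip", "country"),  # absent in this response
    },
)
```

Rules read only from `attributes`, so switching providers means changing the mapping rather than the rules. The `raw` field preserves details such as the model version for later comparison.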

Decision layer

Run deterministic rules, decision trees, scorecards, and routing logic. Produce outcomes such as approve, step-up, review, challenge, limit, or block.

Case review and feedback

Store analyst decisions, confirmed fraud outcomes, and false-positive feedback. Feed those results back into rule tuning.

Monitoring layer

Track attack rates, conversion impact, false positives, manual review volume, approval rates by segment, and rule hit patterns over time.
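As one example of rule hit monitoring, the sketch below counts how often each rule fired and how many of those hits were later confirmed as fraud. The record shape, the label values, and the function name are assumptions.

```python
from collections import Counter


def rule_hit_stats(decisions):
    """Per-rule hit count, confirmed-fraud count, and share of hits confirmed."""
    hits = Counter()
    confirmed = Counter()
    for decision in decisions:
        for rule_id in decision["fired"]:
            hits[rule_id] += 1
            if decision["label"] == "fraud":
                confirmed[rule_id] += 1
    return {
        rule_id: {
            "hits": hits[rule_id],
            "confirmed_fraud": confirmed[rule_id],
            "precision": confirmed[rule_id] / hits[rule_id],
        }
        for rule_id in hits
    }


# Invented labeled decisions.
decisions = [
    {"fired": ["device_velocity"], "label": "fraud"},
    {"fired": ["device_velocity", "disposable_email"], "label": "legit"},
    {"fired": ["disposable_email"], "label": "legit"},
    {"fired": [], "label": "legit"},
]
stats = rule_hit_stats(decisions)
```

A rule with many hits and few confirmed cases, like `disposable_email` in this sample, is a candidate for tuning because it mostly produces false positives.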

This structure helps companies build anti-fraud competency as a repeatable function instead of a collection of disconnected tools.

What good looks like in practice

A mature anti-fraud setup does not depend on mystery scores. It has clear event milestones, complete technical logging, reusable decision logic, and full decision traces. It uses external data where useful, but it does not give away ownership of the logic or the enriched history.

That matters for cost, speed, and control. When your team owns the decision layer, you can adapt faster to new fraud patterns, compare vendors on evidence instead of promises, and keep improving performance without rebuilding your stack every year.

Anti-fraud competency is built through data ownership, traceability, and flexible decision workflows. Buy signals where needed. But keep the core under your control.