Attribute and Model Management: How to Track Stability Without Weakening Your Decision Strategy

Published on: 2026-03-28 21:51:55

In credit, fraud, insurance, and onboarding, teams often assume that more data leads to better models. Sometimes it does. Often it does not. A predictor can look useful in development, then fail in production because its distribution changes, a category is too small, or the data source behaves differently across segments, channels, or time periods.

This is where attribute management and model management matter. Stable models depend on stable attributes. If you do not monitor how predictors are defined, grouped, and used inside decision logic, you can introduce volatility into production decisions without noticing it early enough.

The problem is not limited to data science. Attribute instability travels downstream into policy rules, score thresholds, manual review queues, pricing, fraud controls, and compliance reporting. A weak predictor rarely stays isolated. It affects the entire decision flow.

For teams building deterministic, auditable decision logic, that means stability is not a nice-to-have. It is an operating requirement.

Why adding more attributes can make a model worse

It is tempting to include every available signal in a model. New vendor data, behavioral features, identity checks, device intelligence, transaction patterns, and derived variables can all look predictive during development. But predictive power in a training sample is not the same as operational value in production.

An attribute can hurt model quality for several reasons:

- It has weak representation. Some values or categories occur too rarely to support stable estimation.

- It is sensitive to changes in acquisition mix. A channel shift can alter the predictor distribution quickly.

- It depends on external data quality. Third-party inputs may drift, fail, or change meaning.

- It introduces volatility at segment level. A feature may perform well overall but break in key sub-populations.

- It is hard to explain and govern. That creates friction for auditability, validation, and threshold management.

In practice, a model with fewer well-managed predictors often performs better over time than a model packed with unstable variables. This is one reason scorecards and grouped predictors remain common in regulated environments. They trade a small amount of short-term lift for better control, easier monitoring, and more stable decision logic.

What attribute management actually means

Attribute management is the discipline of defining, documenting, testing, monitoring, and governing the predictors used in models and decision workflows. It covers more than a feature list in a notebook.

Good attribute management should answer five questions:

- Definition: What exactly does the attribute measure?

- Source: Where does the value come from, and how is it computed?

- Transformation: Is it raw, binned, capped, normalized, or combined with other fields?

- Coverage: How often is it present, and for which populations?

- Stability: Does its predictive behavior remain consistent over time?

Without this structure, teams end up with hidden dependencies. A predictor gets reused across scorecards, fraud checks, and pricing logic, but nobody notices when its distribution shifts. By the time the issue appears in approval rates or loss performance, the root cause is already buried across several decision flows.
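The five questions become easier to enforce when they live in a structured registry entry rather than in a notebook. The sketch below uses a hypothetical `AttributeSpec` record. Its field names, the `max_psi` default, and the `validate` checks are illustrative choices, not a standard schema. The `consumers` field records which decision flows reuse the attribute, which is the hidden dependency described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttributeSpec:
    """Hypothetical registry entry answering the five attribute-management questions."""
    name: str                            # Definition: stable identifier
    description: str                     # Definition: what the attribute measures
    source: str                          # Source: system or vendor supplying the raw value
    computation: str                     # Source: how the value is derived
    transformation: str                  # Transformation: "raw", "binned", "capped", ...
    bin_edges: tuple[float, ...] = ()    # Transformation: cut points when binned
    fallback_value: str = "MISSING"      # value used when the source returns nothing
    expected_coverage: float = 1.0       # Coverage: share of records expected to have a value
    max_psi: float = 0.25                # Stability: drift alert threshold (assumed, tune locally)
    consumers: tuple[str, ...] = ()      # decision flows that reuse this attribute

    def validate(self) -> list[str]:
        """Return governance gaps; an empty list means the entry is complete."""
        problems = []
        if not self.description.strip():
            problems.append("missing description")
        if not self.source.strip():
            problems.append("missing source")
        if self.transformation == "binned" and not self.bin_edges:
            problems.append("binned attribute without bin edges")
        if list(self.bin_edges) != sorted(set(self.bin_edges)):
            problems.append("bin edges must be strictly increasing")
        if not 0.0 <= self.expected_coverage <= 1.0:
            problems.append("expected_coverage must be between 0 and 1")
        return problems


# Example entry for a binned age attribute reused by two decision flows.
age = AttributeSpec(
    name="applicant_age",
    description="Age in whole years at application date",
    source="application form",
    computation="application_date minus date_of_birth",
    transformation="binned",
    bin_edges=(25, 35, 50, 65),
    consumers=("credit_scorecard", "pricing_rules"),
)
print(age.validate())  # []
```

With an entry like this, a question such as "which flows break if this vendor field changes?" becomes a lookup on `consumers` rather than a code search.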

If your decision logic combines rules, scorecards, and models, the need for tracing becomes even stronger. For a practical view on how to keep logic explainable in production, see Tracing Models and Decisions.

Why binning and categorizing predictors improves stability

Binning is often treated as old-fashioned compared with raw continuous variables and automated feature engineering. That view misses an important point. Binning is not just a modeling convenience. It is a control mechanism.

When you bin or categorize a predictor, you reduce sensitivity to noise and make the relationship between the predictor and the outcome easier to observe, validate, and monitor. Instead of reacting to every small fluctuation in a raw value, the model evaluates a controlled set of ranges or groups.

This helps in several ways:

- It smooths noise. Small numerical changes do not trigger unstable model responses.

- It improves interpretability. Teams can inspect how each bin behaves and explain its impact.

- It supports monotonic relationships. This is useful when risk should move in a consistent direction.

- It reduces overfitting. The model is less likely to latch onto local patterns that do not persist.

- It makes monitoring easier. Bin-level population and performance changes are easier to track than thousands of raw values.

For example, age as a raw variable may show local irregularities caused by sample composition. Grouping age into meaningful bands can produce a more stable signal. The same applies to income, tenure, transaction count, or time-since-event measures. In fraud settings, sparse device or email patterns may also need grouping before they are safe to use in production decision logic.
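Here is a minimal sketch of the age example, assuming fixed, hand-chosen bands. The cut points in `AGE_EDGES` are purely illustrative, and `bin_value` is a hypothetical helper, not a library function. Missing values get their own label so they stay visible in monitoring instead of being silently absorbed into a band.

```python
import bisect

# Illustrative age bands; the cut points are assumptions, not recommendations.
AGE_EDGES = [25, 35, 50, 65]
AGE_LABELS = ["<25", "25-34", "35-49", "50-64", "65+"]


def bin_value(value, edges, labels, missing_label="MISSING"):
    """Map a raw value to a band label using right-open intervals [lower, upper)."""
    if len(labels) != len(edges) + 1:
        raise ValueError("need exactly one more label than cut points")
    if value is None:
        return missing_label  # keep missing values visible as their own group
    # bisect_right counts the edges <= value, which is the band index.
    return labels[bisect.bisect_right(edges, value)]


ages = [19, 25, 34, 36, 48, 65, None]
print([bin_value(a, AGE_EDGES, AGE_LABELS) for a in ages])
# ['<25', '25-34', '25-34', '35-49', '35-49', '65+', 'MISSING']
```

Ages 36 and 48 land in the same band. Within a band, small movements in the raw value do not change what the model sees, which is the noise-smoothing effect described above.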

If you are working with scorecards or grouped variables inside a rules-based environment, Implementing scorecards in rule engines provides a related foundation.

The risk of weak categories and thin bins

Binning helps stability, but only when the bins themselves are statistically and operationally sound. A category with very low volume can create a false sense of predictive power. In development, it may appear to separate good and bad outcomes sharply. In production, the same category may swing wildly because it never had enough representative power to begin with.

This is one of the most common stability problems in model management. Thin bins lead to unstable estimates. Unstable estimates lead to unstable scores. Unstable scores then affect cutoffs, routing logic, and downstream decisions.

Typical warning signs include:

- Very small sample counts in one or more bins.

- Large performance swings between development, validation, and recent production windows.

- Non-intuitive rank ordering that changes across time periods.

- Sharp movement in bin distributions after channel, policy, or vendor changes.

- Strong performance in aggregate but weak or contradictory behavior in key segments.
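Two of these warning signs, thin bins and broken rank ordering, can be checked mechanically for each time window. The sketch below assumes each bin arrives as a hypothetical `(label, n_records, n_bad)` tuple in band order. The `min_count=500` floor is an illustrative assumption, not an industry standard. Running the check separately on development, validation, and recent production windows and comparing the results covers the "changes across time periods" case.

```python
def bin_warnings(bins, min_count=500, risk_increases=True):
    """
    Flag thin bins and rank-order breaks for one time window.

    bins: list of (label, n_records, n_bad) tuples in band order.
    min_count: illustrative volume floor, not an industry standard.
    risk_increases: True if the bad rate should rise from the first band to the last.
    """
    warnings = []
    rates = []
    for label, n, bad in bins:
        if n < min_count:
            warnings.append(f"{label}: thin bin ({n} < {min_count})")
        rates.append(bad / n if n else float("nan"))
    for i in range(1, len(bins)):
        prev, curr = rates[i - 1], rates[i]
        # Comparisons with NaN are False, so empty bins are never reported as reversals.
        reversed_order = curr < prev if risk_increases else curr > prev
        if reversed_order:
            warnings.append(
                f"{bins[i - 1][0]} -> {bins[i][0]}: rank ordering reversed "
                f"({prev:.1%} then {curr:.1%})"
            )
    return warnings


# Made-up counts for age bands where risk is expected to fall with age.
window = [("<25", 1200, 96), ("25-34", 3000, 180), ("35-49", 4000, 160),
          ("50-64", 2500, 75), ("65+", 150, 9)]
for w in bin_warnings(window, risk_increases=False):
    print(w)
# 65+: thin bin (150 < 500)
# 50-64 -> 65+: rank ordering reversed (3.0% then 6.0%)
```

In this made-up example, the thin bin and the reversal coincide. The tiny oldest band carries a bad rate that contradicts the overall trend, which is exactly the false signal thin bins tend to produce.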

When a category does not have enough representative power, it should not automatically stay in the model just because it improves a development metric. Sometimes the right answer is to merge categories, re-bin the predictor, cap extreme values, or remove the attribute entirely.
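Merging is the simplest of these remedies. The sketch below is a hypothetical greedy merge: it combines adjacent bins from left to right until each merged bin reaches a volume floor, and folds any thin remainder into the last bin. It looks only at volume. A real re-binning exercise would also check that each merge makes business sense and preserves the expected event-rate ordering.

```python
def merge_thin_bins(bins, min_count=500):
    """
    Greedily merge adjacent bins, left to right, until every merged bin holds
    at least min_count records. A thin remainder at the end is folded into the
    last merged bin. bins: list of (label, n_records, n_bad) in band order.
    """
    merged = []
    labels, n_sum, bad_sum = [], 0, 0
    for label, n, bad in bins:
        labels.append(label)
        n_sum += n
        bad_sum += bad
        if n_sum >= min_count:
            merged.append((" | ".join(labels), n_sum, bad_sum))
            labels, n_sum, bad_sum = [], 0, 0
    if labels:  # leftover bins that never reached min_count
        if merged:
            last_label, last_n, last_bad = merged.pop()
            merged.append((" | ".join([last_label, *labels]), last_n + n_sum, last_bad + bad_sum))
        else:
            merged.append((" | ".join(labels), n_sum, bad_sum))
    return merged


# Made-up counts; the "65+" band is too thin to stand alone.
window = [("<25", 1200, 96), ("25-34", 3000, 180), ("35-49", 4000, 160),
          ("50-64", 2500, 75), ("65+", 150, 9)]
for label, n, bad in merge_thin_bins(window):
    print(f"{label}: n={n}, bad rate={bad / n:.1%}")
```

In this example, the merged "50-64 | 65+" band has a bad rate of about 3.2%. That restores the expected downward ordering, where the standalone 150-record band showed a reversal.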

That decision is not anti-data science. It is good model governance.

Model stability is a decision strategy issue, not only a model issue

Many teams treat model monitoring as a separate discipline from policy management. In production, that separation rarely holds.

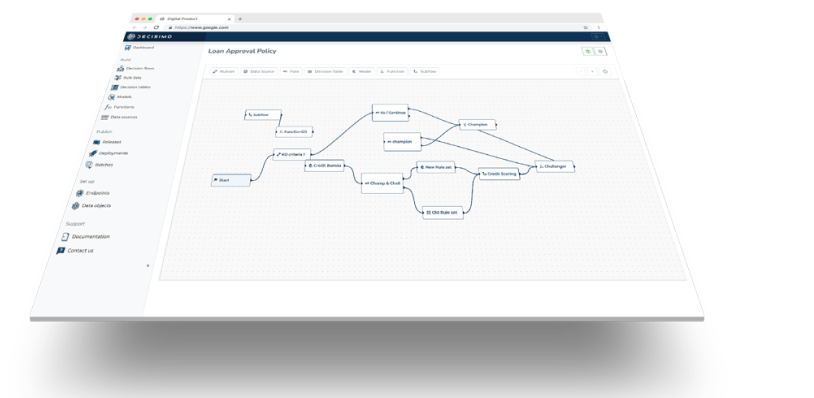

A model score is usually only one part of a larger decision flow. It feeds approval policies, risk-based pricing, fraud escalation, manual review triggers, exposure controls, and customer treatment rules. If the model becomes unstable, the wider decision strategy becomes unstable too.

That is why model management should sit close to decision logic management. Teams need to track not only whether a model performs well, but whether it continues to behave as expected inside the operating policy.

For lending teams, this becomes especially important as scale increases and policy layers multiply. See Decision Strategy in Scaled Lending: How to Manage Decision Logic Without Destabilizing Growth for a related view on keeping changes controlled.

What to monitor when tracking attribute and model stability

Stability monitoring should happen at both the attribute level and the model level. Watching only top-line model metrics surfaces problems too late: by then, drift may already be affecting production outcomes.

Attribute-level monitoring

- Population distribution by bin or category across time windows

- Missing rate and default or fallback value usage

- Coverage by channel, product, geography, or customer segment

- Outcome rate within each bin to check rank ordering and consistency

- Data source freshness and failure rates for external attributes
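The first three checks can share one grouping routine. In this sketch, `profile_by` is a hypothetical helper that assumes records are dicts with an already-binned attribute. Grouping by a time-window key shows distribution drift and missing-rate movement over time. Grouping by channel or product shows coverage by segment.

```python
from collections import Counter


def profile_by(records, attribute, group_key="window", missing_label="MISSING"):
    """
    Per-group bin shares and missing rate for one already-binned attribute.

    records: iterable of dicts, e.g. {"window": "2026-01", "channel": "web", "age_band": "25-34"}.
    group_key: "window" for drift over time, or "channel", "product", ... for segment coverage.
    A None or absent attribute value is counted under missing_label.
    """
    groups = {}
    for rec in records:
        value = rec.get(attribute)
        groups.setdefault(rec[group_key], []).append(missing_label if value is None else value)
    report = {}
    for group, labels in sorted(groups.items()):
        counts = Counter(labels)
        total = len(labels)
        shares = {label: n / total for label, n in counts.items()}
        report[group] = {
            "n": total,
            "missing_rate": shares.get(missing_label, 0.0),
            "shares": shares,
        }
    return report


# Made-up records for illustration.
records = [
    {"window": "2026-01", "channel": "web", "age_band": "25-34"},
    {"window": "2026-01", "channel": "branch", "age_band": "35-49"},
    {"window": "2026-02", "channel": "web", "age_band": None},
    {"window": "2026-02", "channel": "web", "age_band": "25-34"},
]
print(profile_by(records, "age_band")["2026-02"]["missing_rate"])  # 0.5
print(profile_by(records, "age_band", group_key="channel")["web"]["missing_rate"])
```

Outcome rates per bin and source failure rates can be added to the same report in the same way, once outcomes and vendor call logs are joined to the records.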

Model-level monitoring

- Score distribution drift

- Approval or decline rate movement

- Population Stability Index (PSI) or equivalent drift measures

- Bad rate or fraud rate by score band

- Calibration changes between predicted and observed outcomes

- Segment-level performance, not just portfolio averages
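The Population Stability Index compares the share of records in each score band, or attribute bin, between a baseline and a recent window. The baseline is typically the development sample. The formula is the sum over bands of `(a - e) * ln(a / e)`, where `e` is the baseline share and `a` is the recent share. The sketch below assumes shares are already computed per band. The `eps` floor for empty bands and the 0.10/0.25 cut points in `psi_status` are common conventions, not fixed rules.

```python
import math


def psi(expected_shares, actual_shares, eps=1e-6):
    """
    Population Stability Index between a baseline and a recent window:
        PSI = sum over bands of (a - e) * ln(a / e)
    expected_shares / actual_shares: {band: share of records}, each summing to 1.
    eps floors empty bands so the logarithm stays finite (an assumed convention).
    """
    total = 0.0
    for band in set(expected_shares) | set(actual_shares):
        e = max(expected_shares.get(band, 0.0), eps)
        a = max(actual_shares.get(band, 0.0), eps)
        total += (a - e) * math.log(a / e)
    return total


def psi_status(value):
    """Widely quoted rule of thumb; calibrate the cut points to your own portfolio."""
    if value < 0.10:
        return "stable"
    if value < 0.25:
        return "monitor"
    return "investigate"


dev = {"A": 0.25, "B": 0.25, "C": 0.25, "D": 0.25}     # development score bands
recent = {"A": 0.10, "B": 0.20, "C": 0.30, "D": 0.40}  # made-up recent window
value = psi(dev, recent)
print(round(value, 3), psi_status(value))  # 0.228 monitor
```

The same function works at attribute level: pass bin shares for one predictor instead of score-band shares.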

The key is to connect these views. If score drift appears, you should be able to trace which attributes moved, which bins changed, and which decision thresholds are now exposed. That traceability makes remediation faster and makes audits simpler.
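For a points-based scorecard, a classic way to trace score drift back to attributes is a characteristic analysis. For each attribute, multiply the change in each bin's population share by the points that bin contributes, then sum. The sketch assumes an additive scorecard with a hypothetical points table. Under that assumption, the per-attribute shifts add up to the change in the mean score, so ranking them shows where to look first.

```python
def characteristic_shift(expected_shares, actual_shares, points):
    """
    Change in mean score contributed by one scorecard attribute:
        shift = sum over bins of (actual_share - expected_share) * points
    Bins missing from the points table are ignored.
    """
    return sum(
        (actual_shares.get(b, 0.0) - expected_shares.get(b, 0.0)) * pts
        for b, pts in points.items()
    )


def rank_attribute_shifts(attributes):
    """attributes: {name: (expected_shares, actual_shares, points)}; largest |shift| first."""
    shifts = {
        name: characteristic_shift(exp, act, pts)
        for name, (exp, act, pts) in attributes.items()
    }
    return sorted(shifts.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical points tables and made-up shares for two attributes.
attributes = {
    "age_band": (
        {"<25": 0.2, "25-34": 0.4, "35-49": 0.4},   # development shares
        {"<25": 0.4, "25-34": 0.4, "35-49": 0.2},   # recent shares
        {"<25": 10, "25-34": 20, "35-49": 30},      # scorecard points per bin
    ),
    "tenure_band": (
        {"<1y": 0.3, "1-3y": 0.4, "3y+": 0.3},
        {"<1y": 0.35, "1-3y": 0.4, "3y+": 0.25},
        {"<1y": 5, "1-3y": 15, "3y+": 25},
    ),
}
for name, shift in rank_attribute_shifts(attributes):
    print(f"{name}: {shift:+.1f} points")
# age_band: -4.0 points
# tenure_band: -1.0 points
```

In this made-up case, the mean score falls by about 5 points, and most of that comes from the shift toward younger applicants. Comparing the new mean against the score cutoffs then shows which thresholds are exposed.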

For teams refining underwriting controls, Metrics to Monitor in Lending and Credit Underwriting is a useful companion piece.

Practical rules for stable predictor design

There is no single template for every portfolio, but several rules hold across most use cases.

- Prefer predictors with clear business meaning. If nobody can explain why a variable should predict risk, it is harder to govern and defend.

- Group continuous variables thoughtfully. Bins should reflect enough volume, stable behavior, and sensible ordering.

- Avoid categories with weak representation. Merge them where possible or remove them.

- Test stability across time and segments. A predictor that works only in one campaign or one quarter is fragile.

- Document every transformation. Raw field, derived field, binning logic, and fallback handling should be traceable.

- Monitor external dependencies. If a vendor changes payload quality or response patterns, your model may shift before headline metrics catch up.

- Align model changes with policy changes. Threshold updates without attribute review can produce unintended portfolio effects.

This approach fits well with deterministic decision workflows, where logic needs to remain observable and versioned. Even if you use machine learning, the surrounding decision logic should make it clear how model outputs are evaluated and acted on.
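The deterministic layer around a model can be as small as a versioned cutoff table plus a function that records which policy and model produced each decision. `ScorePolicy`, `decide`, and the cutoff values below are hypothetical. The point of the sketch is that thresholds live in versioned data rather than being scattered through code, so a threshold change can be reviewed alongside attribute changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScorePolicy:
    """Hypothetical versioned cutoffs; the numbers used below are illustrative."""
    policy_version: str
    model_version: str
    decline_below: int
    refer_below: int

    def __post_init__(self):
        if self.decline_below > self.refer_below:
            raise ValueError("decline cutoff must not exceed refer cutoff")


def decide(score: int, policy: ScorePolicy) -> dict:
    """Deterministic score-to-action mapping that returns an audit record."""
    if score < policy.decline_below:
        action = "decline"
    elif score < policy.refer_below:
        action = "refer"
    else:
        action = "approve"
    return {
        "action": action,
        "score": score,
        "policy_version": policy.policy_version,
        "model_version": policy.model_version,
    }


policy = ScorePolicy("2026-03-a", "scorecard-v3", decline_below=560, refer_below=620)
print([decide(s, policy)["action"] for s in (540, 600, 620)])
# ['decline', 'refer', 'approve']
```

Because every decision record carries both version identifiers, a later drift investigation can separate "the model moved" from "the policy moved".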

When to remove an attribute from a model

Teams are often reluctant to remove attributes once they have been engineered, tested, and approved. But a predictor should earn its place continuously, not just once.

Consider removing or redesigning an attribute when:

- Its distribution drifts repeatedly without a stable business explanation.

- Its bins show weak volume or unstable event rates.

- Its contribution is small, but its governance overhead is high.

- It depends on an external source with inconsistent uptime or quality.

- It creates explainability problems in regulated decisions.

- It adds complexity to decision logic with little operational benefit.
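Several of these criteria can be turned into an automatic review trigger. Explainability and governance overhead still need human judgment. The sketch below assumes a hypothetical `AttributeHealth` summary fed by the monitoring described earlier. Every threshold is an illustrative default, including the information value (IV) floor of 0.02, which is a widely quoted scorecard rule of thumb rather than a standard. The output is a list of reasons to review, not an automatic removal.

```python
from dataclasses import dataclass


@dataclass
class AttributeHealth:
    """Hypothetical monitoring summary for one attribute."""
    name: str
    psi_history: list[float]     # PSI per recent window against the baseline
    min_bin_count: int           # smallest bin volume in the latest window
    source_failure_rate: float   # share of lookups where an external source failed
    information_value: float     # strength measure, assumed to be computed elsewhere


def review_reasons(h, psi_limit=0.25, max_breaches=2, min_count=500,
                   max_failure=0.02, min_iv=0.02):
    """Return reasons to consider removing or redesigning the attribute.

    All thresholds are illustrative defaults, not recommendations.
    """
    reasons = []
    breaches = sum(p > psi_limit for p in h.psi_history)
    if breaches >= max_breaches:
        reasons.append(f"repeated drift: {breaches} windows above PSI {psi_limit}")
    if h.min_bin_count < min_count:
        reasons.append(f"thin bins: smallest bin has {h.min_bin_count} records")
    if h.source_failure_rate > max_failure:
        reasons.append(f"unreliable source: {h.source_failure_rate:.1%} failure rate")
    if h.information_value < min_iv:
        reasons.append(f"weak contribution: IV {h.information_value:.3f}")
    return reasons


# Made-up summaries for two attributes.
device = AttributeHealth("email_pattern_group", [0.12, 0.31, 0.28], 140, 0.05, 0.015)
tenure = AttributeHealth("tenure_band", [0.03, 0.05, 0.04], 2100, 0.0, 0.18)
for h in (device, tenure):
    print(h.name, review_reasons(h))
```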

In many cases, a simpler attribute set leads to stronger long-term performance. The goal is not to maximize the number of predictors. The goal is to build decision logic that keeps working under real operating conditions.

Build for control, not just for lift

Good model management is not about chasing the highest development score. It is about keeping production decisions stable, explainable, and aligned with business policy.

That starts with attribute management. If you throw weakly represented attributes into a model, the instability will not stay inside the model. It will spread into your decision strategy. Binning and categorizing predictors helps reduce that risk because it forces structure, improves interpretability, and makes drift easier to detect before it becomes expensive.

For teams running automated decisions at scale, this is the practical standard: use attributes you can define, trace, monitor, and defend. Group predictors in ways that preserve representative power. Remove features that add noise. Then connect model monitoring to the wider decision flow, so performance issues do not turn into policy failures.

That is how stable models support stable business decisions.