How to implement an automated decision strategy that keeps working under failure

Published on: 2026-03-28 21:57:12

Most teams start automation by mapping the ideal decision flow. Inputs arrive, external data sources respond, rules evaluate, and the platform returns a result. That is useful, but incomplete.

Production decision logic fails at the edges. A bureau may time out. A fraud vendor may return partial payloads. A model service may be unavailable. A key field may be empty, malformed, or delayed. If your automated decision strategy does not define what happens next, you do not have a strategy. You have a dependency chain.

A resilient automated decision strategy does 2 things at once. It makes accurate decisions when everything works, and it makes controlled decisions when parts of the system do not. That means explicit failover paths, explicit missing data handling, and full traceability for every outcome.

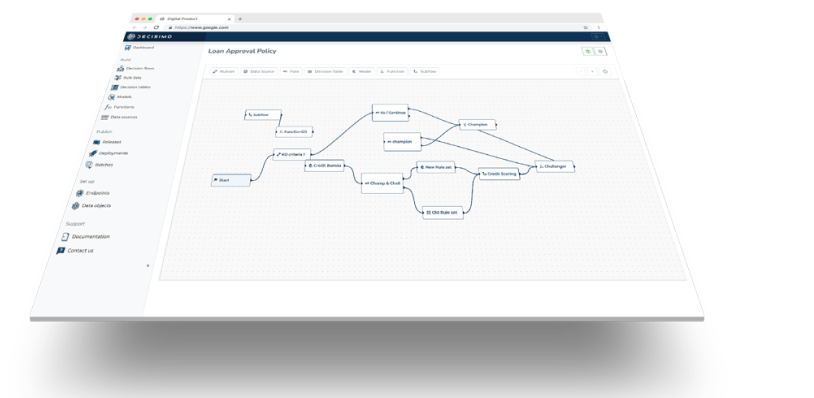

If your team is still defining ownership and control of decisioning, this article on who makes decisions is a useful starting point. If you need the operational view of rollout, this step-by-step guide to automating the loan approval process shows how teams move from manual reviews to production decision workflows.

What an automated decision strategy actually includes

An automated decision strategy is more than a ruleset. It is the full structure that defines how a decision is evaluated, what data is required, what fallback logic applies, and what result is returned under normal and abnormal conditions.

In practice, that usually includes:

- Decision intent: what the decision is for, such as approve, decline, refer, price, route, or trigger review.

- Input contract: required and optional data fields, valid ranges, formats, and source systems.

- Decision logic: decision trees, decision tables, scorecards, policy rules, and API orchestrations.

- External dependencies: fraud checks, credit data, KYC services, identity verification, pricing services, or internal APIs.

- Failover logic: what happens if a service, model, or workflow component fails.

- Missing data logic: what happens if fields are absent, stale, contradictory, or incomplete.

- Auditability: logs, rule versioning, decision traces, and reason codes.

The important point is simple. Failure handling is not an operational note outside the strategy. It is part of the decision logic itself.

Start with decision classes, not one giant fallback

A common mistake is to define one generic fallback rule for every failure. For example: if anything breaks, send the case to manual review. That sounds safe, but it creates cost, delays, and inconsistent outcomes.

A better approach is to classify decisions by business risk and then define failover behavior for each class.

1. Low-risk decisions

These are decisions where a conservative automated fallback is acceptable. For example, routing a customer to a standard onboarding path, applying a default segmentation rule, or setting a non-critical workflow status.

For low-risk decisions, the failover strategy may be simple: use a minimum hardcoded strategy with limited paths and clear thresholds.

2. Medium-risk decisions

These decisions affect revenue, customer experience, or operations, but do not create immediate regulatory exposure if handled conservatively. Examples include pre-qualification, document routing, or limit recommendations before final approval.

Here, the fallback may allow partial automation, but only under narrower policy rules.

3. High-risk decisions

These include underwriting, fraud decisions, sanctions screening, pricing, and any decision with compliance or financial impact. For these, failover must be tightly controlled. The fallback may be a restrictive ruleset, a refer decision, or a temporary stop on automated approvals.

This classification helps you avoid 2 bad outcomes. One is unsafe automation during outages. The other is unnecessary manual review for decisions that could still be handled deterministically.
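One way to make this classification concrete is a small lookup from decision type to risk class, and from risk class to fallback behavior. The sketch below is illustrative only: the decision type names, the `FallbackMode` values, and the rule that unknown decision types default to the high-risk class are assumptions, not part of any specific platform.

```python
from enum import Enum


class RiskClass(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class FallbackMode(Enum):
    MINIMUM_RULES = "minimum_rules"  # conservative automated fallback
    NARROW_POLICY = "narrow_policy"  # partial automation under narrower rules
    REFER_OR_STOP = "refer_or_stop"  # restrictive ruleset, refer, or stop approvals


# Hypothetical decision types, used only for illustration.
DECISION_RISK_CLASS = {
    "onboarding_routing": RiskClass.LOW,
    "pre_qualification": RiskClass.MEDIUM,
    "underwriting": RiskClass.HIGH,
    "sanctions_screening": RiskClass.HIGH,
}

FALLBACK_BY_CLASS = {
    RiskClass.LOW: FallbackMode.MINIMUM_RULES,
    RiskClass.MEDIUM: FallbackMode.NARROW_POLICY,
    RiskClass.HIGH: FallbackMode.REFER_OR_STOP,
}


def fallback_mode_for(decision_type: str) -> FallbackMode:
    """Return the fallback behavior for a decision type.

    Assumption: a decision type that has not been classified is treated
    as high risk, so it never receives a permissive fallback by accident.
    """
    risk_class = DECISION_RISK_CLASS.get(decision_type, RiskClass.HIGH)
    return FALLBACK_BY_CLASS[risk_class]
```

Keeping the mapping in data rather than in scattered conditionals makes it easier to review which decisions are allowed to continue automatically during an outage.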

Design the minimum hardcoded strategy first

When a complex decision system fails, you need a smaller, stable layer of decision logic that can still run. Think of it as a minimum viable policy. It should be explicit, testable, and intentionally narrow.

This minimum hardcoded strategy should not try to replicate the full decision flow. Its job is to preserve safe operation until normal decision workflows recover.

What the minimum fallback should do

- Use a small number of trusted inputs that are usually available.

- Return only a limited set of outcomes, such as approve low-risk cases, decline clearly ineligible cases, or refer everything else.

- Avoid dependencies on non-essential external services.

- Use fixed thresholds and simple rules that can be audited quickly.

- Produce explicit reason codes that show the fallback path was used.

Example: lending failover logic

Assume your normal underwriting flow uses bureau data, internal history, fraud signals, affordability calculations, and a pricing model. If that flow is unavailable, your fallback should not try to simulate all of that with weak substitutes.

Instead, you might define a minimum hardcoded strategy like this:

- Decline if applicant age is below policy threshold.

- Decline if required identity verification status is failed.

- Decline if internal negative history flag is true.

- Approve only if loan amount is below a low exposure cap, income is present and above a fixed minimum, and product type is within a restricted set.

- Refer all other applications.

This is conservative by design. It limits exposure, keeps decisions deterministic, and maintains continuity.
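The rules above could be expressed as a short, ordered function. This is a minimal sketch, not a recommended policy: every threshold, the product name, the field names, and the `FB_` reason codes are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical thresholds; real values are a policy decision.
MIN_AGE = 18
MAX_FALLBACK_AMOUNT = 1_000
MIN_INCOME = 2_000
ALLOWED_PRODUCTS = frozenset({"personal_loan_basic"})


@dataclass(frozen=True)
class Application:
    age: int
    identity_status: str  # assumed values: "passed", "failed", "pending"
    negative_history: bool
    amount: float
    income: Optional[float]  # None means income was not provided
    product_type: str


def minimum_fallback_decision(app: Application) -> Tuple[str, str]:
    """Return (result, reason_code) using only the minimum hardcoded rules."""
    if app.age < MIN_AGE:
        return "decline", "FB_AGE_BELOW_THRESHOLD"
    if app.identity_status == "failed":
        return "decline", "FB_IDENTITY_FAILED"
    if app.negative_history:
        return "decline", "FB_NEGATIVE_HISTORY"
    if (
        app.amount < MAX_FALLBACK_AMOUNT
        and app.income is not None
        and app.income > MIN_INCOME
        and app.product_type in ALLOWED_PRODUCTS
    ):
        return "approve", "FB_LOW_EXPOSURE_APPROVE"
    # Everything that is neither clearly ineligible nor clearly low risk.
    return "refer", "FB_REFER_DEFAULT"
```

Every reason code carries the `FB_` prefix, so a reader of the decision trace can tell at a glance that the fallback path produced the outcome.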

For teams working in lending, this article on typical underwriting policy and this guide to replacing manual underwriting with automated decision logic provide useful examples of how policy rules can be broken into manageable layers.

Separate technical failure from policy fallback

Another common mistake is mixing system errors with business outcomes. A timeout from a third-party service is not the same as a fraud fail. A missing field is not the same as a negative eligibility result.

Your decision logic should keep these states separate.

- Business result: approve, decline, refer, route, price, or review.

- Technical state: success, timeout, partial response, invalid payload, dependency unavailable, or ruleset exception.

- Fallback state: normal path, degraded path, emergency minimum path.

This matters for auditability, operations, and customer support. If an application was referred because a provider timed out, that should be visible in the decision trace. If it was declined by policy, that should also be clear. Blurring those causes makes control weaker, not stronger.
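A simple way to enforce that separation is to store the three states as distinct fields on every decision record. The sketch below assumes a record shape and enum names chosen for illustration; the members mirror the lists above.

```python
from dataclasses import dataclass
from enum import Enum


class BusinessResult(Enum):
    APPROVE = "approve"
    DECLINE = "decline"
    REFER = "refer"


class TechnicalState(Enum):
    SUCCESS = "success"
    TIMEOUT = "timeout"
    PARTIAL_RESPONSE = "partial_response"
    INVALID_PAYLOAD = "invalid_payload"
    DEPENDENCY_UNAVAILABLE = "dependency_unavailable"
    RULESET_EXCEPTION = "ruleset_exception"


class FallbackState(Enum):
    NORMAL = "normal"
    DEGRADED = "degraded"
    EMERGENCY_MINIMUM = "emergency_minimum"


@dataclass(frozen=True)
class DecisionRecord:
    result: BusinessResult
    technical_state: TechnicalState
    fallback_state: FallbackState
    reason_code: str  # hypothetical codes in the examples below


def is_pure_policy_outcome(record: DecisionRecord) -> bool:
    """True when the result came from policy alone, with no technical failure."""
    return (
        record.technical_state is TechnicalState.SUCCESS
        and record.fallback_state is FallbackState.NORMAL
    )


# Same business result, different causes, and the record keeps them apart.
referred_by_timeout = DecisionRecord(
    BusinessResult.REFER, TechnicalState.TIMEOUT,
    FallbackState.DEGRADED, "BUREAU_TIMEOUT_REFERRED",
)
referred_by_policy = DecisionRecord(
    BusinessResult.REFER, TechnicalState.SUCCESS,
    FallbackState.NORMAL, "POLICY_MANUAL_REVIEW_BAND",
)
```

With this shape, a support agent or auditor can filter referrals caused by outages without parsing free-text messages.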

This article on tracing models and decisions goes deeper into how to maintain decision traces across rules, models, and external calls.

Build an explicit missing data strategy

Missing data is not one problem. It is several different problems that need different responses.

In production systems, data may be:

- Absent

- Null

- Empty but technically present

- Out of date

- Malformed

- Inconsistent across sources

- Delayed from an upstream system

- Unavailable because a provider failed

If all of these map to the same rule, you will get poor decisions and poor diagnostics.

Define data criticality

Start by classifying fields and attributes into 3 groups:

- Mandatory for any decision: if missing, the case cannot be decided automatically.

- Mandatory for specific paths: if missing, only some decision branches are blocked.

- Optional but informative: if missing, the decision can still proceed with adjusted logic.

For example, customer ID, product type, and requested amount may be mandatory for any flow. Verified income may be mandatory only for higher exposure paths. Device intelligence may be optional but useful for fraud detection.
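As a rough sketch, the classification can live in a single table that the decision flow consults before evaluating any rules. The field names below follow the example in the text, while the function name and the treatment of `None` as missing are assumptions made for illustration.

```python
from enum import Enum
from typing import Any, Dict, List


class Criticality(Enum):
    MANDATORY_ALWAYS = "mandatory_for_any_decision"
    MANDATORY_FOR_PATH = "mandatory_for_specific_paths"
    OPTIONAL = "optional_but_informative"


FIELD_CRITICALITY = {
    "customer_id": Criticality.MANDATORY_ALWAYS,
    "product_type": Criticality.MANDATORY_ALWAYS,
    "requested_amount": Criticality.MANDATORY_ALWAYS,
    "verified_income": Criticality.MANDATORY_FOR_PATH,
    "device_intelligence": Criticality.OPTIONAL,
}


def missing_by_criticality(payload: Dict[str, Any]) -> Dict[Criticality, List[str]]:
    """Group missing fields by how much they matter to the decision.

    Assumption: a field counts as missing when it is absent or None.
    Empty strings, stale values, and malformed values need their own checks.
    """
    missing: Dict[Criticality, List[str]] = {level: [] for level in Criticality}
    for name, level in FIELD_CRITICALITY.items():
        if payload.get(name) is None:
            missing[level].append(name)
    return missing
```

For instance, a payload containing only the three always-mandatory fields would report `verified_income` as blocking certain paths and `device_intelligence` as informative only.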

Define behavior per missing data type

Once data is classified, define how the strategy responds.

- Mandatory missing: stop the path, then return a refer decision, reject the request as invalid, or request more data.

- Optional missing: continue with reduced-confidence logic or conservative thresholds.

- Stale data: continue only if age is within a defined tolerance, otherwise refresh or refer.

- Malformed data: treat as invalid input, not as a negative policy signal.

- Contradictory data: trigger consistency checks and route to review if thresholds are exceeded.

This makes the decision logic predictable. It also gives product, risk, and engineering teams a shared language for implementation.
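The responses listed above might be encoded as one dispatch function, so each data problem has exactly one documented outcome. This is a sketch under stated assumptions: the 30-day staleness tolerance, the mismatch tolerance, the enum names, and the choice of refer for missing mandatory data are all hypothetical.

```python
from datetime import timedelta
from enum import Enum
from typing import Optional


class DataIssue(Enum):
    MANDATORY_MISSING = "mandatory_missing"
    OPTIONAL_MISSING = "optional_missing"
    STALE = "stale"
    MALFORMED = "malformed"
    CONTRADICTORY = "contradictory"


class Action(Enum):
    REFER = "refer"
    CONTINUE = "continue"
    CONTINUE_CONSERVATIVE = "continue_with_conservative_thresholds"
    REFRESH_OR_REFER = "refresh_or_refer"
    REJECT_INVALID_INPUT = "reject_invalid_input"
    REVIEW = "route_to_review"


MAX_DATA_AGE = timedelta(days=30)  # hypothetical staleness tolerance
MISMATCH_TOLERANCE = 0.10          # hypothetical relative difference between sources


def action_for(
    issue: DataIssue,
    *,
    data_age: Optional[timedelta] = None,
    mismatch: float = 0.0,
) -> Action:
    """Map a data problem to the strategy's response."""
    if issue is DataIssue.MANDATORY_MISSING:
        return Action.REFER  # one of the options named above
    if issue is DataIssue.OPTIONAL_MISSING:
        return Action.CONTINUE_CONSERVATIVE
    if issue is DataIssue.STALE:
        # Unknown age is treated as too old.
        if data_age is not None and data_age <= MAX_DATA_AGE:
            return Action.CONTINUE
        return Action.REFRESH_OR_REFER
    if issue is DataIssue.MALFORMED:
        return Action.REJECT_INVALID_INPUT  # invalid input, not a policy signal
    if issue is DataIssue.CONTRADICTORY:
        return Action.REVIEW if mismatch > MISMATCH_TOLERANCE else Action.CONTINUE
    raise ValueError(f"unhandled data issue: {issue}")
```

The final `raise` is deliberate: if a new issue type is added without a defined response, the gap surfaces as an error instead of an accidental default.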

Do not silently replace missing data with defaults

Default values can hide risk. If income is missing and your system silently sets it to zero, that may create unintended declines. If a fraud score is missing and the system sets it to a neutral value, that may create false approvals.

Defaults are acceptable only when they are explicit policy choices with known impact. They must appear in the decision trace and reason codes.
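A minimal sketch of that rule: defaults come only from an approved list, and every use is written to the trace. The `APPROVED_DEFAULTS` table, the `Trace` class, and the `DEFAULT_APPLIED:` code format are hypothetical names introduced for this example.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List

# Hypothetical policy-approved defaults. A field that is not listed here
# has no default and must stay missing.
APPROVED_DEFAULTS: Dict[str, Any] = {"marketing_segment": "standard"}


@dataclass
class Trace:
    defaults_applied: List[str] = field(default_factory=list)


def resolve(payload: Dict[str, Any], name: str, trace: Trace) -> Any:
    """Return the field value, an approved default, or None.

    A default is applied only when policy has approved one, and each
    use is recorded so it shows up in the decision trace.
    """
    value = payload.get(name)
    if value is not None:
        return value
    if name in APPROVED_DEFAULTS:
        trace.defaults_applied.append(f"DEFAULT_APPLIED:{name}")
        return APPROVED_DEFAULTS[name]
    return None  # still missing; the caller must handle it explicitly
```

Note that `income` has no entry in the approved list here, so a missing income stays missing rather than becoming zero.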

Use layered decision logic for graceful degradation

The most resilient automated decision strategies are layered. They do not depend on one large workflow that either works fully or fails fully.

A practical layered structure looks like this:

Layer 1: Input validation

Check schema, required fields, formatting, and basic consistency before any business rules run.

Layer 2: Core eligibility rules

Run the minimum policy checks that do not depend on fragile integrations.

Layer 3: Enrichment and external data

Call providers, internal APIs, fraud services, and models. Capture success, failure, freshness, and payload quality for each source.

Layer 4: Full policy evaluation

Apply richer decision logic when the required data is present and valid.

Layer 5: Fallback evaluation

If enrichment fails or required attributes are missing, route to the correct degraded policy. Do not restart the whole flow. Evaluate the case against the relevant fallback ruleset.

This layered approach supports controlled degradation. It also makes testing simpler because each failure mode can be validated at the right layer.
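The five layers can be sketched as one short pipeline in which each layer either returns an outcome or hands over to the next. Everything named here is an assumption for illustration: the field names, the score threshold, the reason codes, and the idea that enrichment signals failure by raising `TimeoutError`.

```python
from typing import Any, Callable, Dict, Optional, Tuple

Payload = Dict[str, Any]
Outcome = Tuple[str, str, str]  # (result, path, reason_code)

MIN_SCORE = 600  # hypothetical full-policy threshold


def validate(payload: Payload) -> Optional[str]:
    """Layer 1: check required fields before any business rules run."""
    for name in ("customer_id", "requested_amount"):
        if payload.get(name) is None:
            return "INVALID_INPUT_MISSING_" + name.upper()
    return None


def core_eligibility(payload: Payload) -> Optional[str]:
    """Layer 2: minimum policy checks with no external dependencies."""
    if payload.get("negative_history") is True:
        return "CORE_NEGATIVE_HISTORY"
    return None


def fallback_policy(cause: str) -> Outcome:
    """Layer 5: degraded ruleset; this sketch refers every case."""
    return ("refer", "degraded", "FALLBACK_" + cause)


def full_policy(enriched: Payload) -> Outcome:
    """Layer 4: richer logic, only when the required data is present."""
    score = enriched.get("score")
    if score is None:
        return fallback_policy("SCORE_MISSING")
    if score >= MIN_SCORE:
        return ("approve", "normal", "FULL_POLICY_APPROVE")
    return ("refer", "normal", "FULL_POLICY_REFER")


def decide(payload: Payload, enrich: Callable[[Payload], Payload]) -> Outcome:
    error = validate(payload)
    if error:
        return ("reject", "normal", error)
    reason = core_eligibility(payload)
    if reason:
        return ("decline", "normal", reason)
    try:
        enriched = enrich(payload)  # Layer 3: providers, models, internal APIs
    except TimeoutError:
        # Do not restart the flow; evaluate against the fallback ruleset.
        return fallback_policy("ENRICHMENT_TIMEOUT")
    return full_policy(enriched)
```

Because `enrich` is passed in as a function, each failure mode can be simulated in a test by supplying a stand-in that raises or returns a partial payload.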

If you are working with external providers, this guide to integrating external data sources and this overview of data sources you can use are useful references when designing dependency-aware decision workflows.

Define reason codes for every fallback path

Reason codes should not only explain policy outcomes. They should also explain degraded outcomes.

Examples include:

- External credit data timeout. Minimum policy applied.

- Fraud provider unavailable. Referred under degraded risk policy.

- Mandatory income attribute missing. Application sent for review.

- Identity payload invalid. Request rejected due to input quality.

- Model service unavailable. Rules-only fallback path used.

These codes support 4 things:

- Operational monitoring

- Auditability

- Customer support

- Post-incident analysis

Without clear reason codes, teams can see volume changes but not the cause behind them.
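One possible shape for this is a small registry in which each code carries its message and a flag marking it as a degraded outcome. The messages below are the examples from the list above. The code identifiers, the `degraded` flag, and the extra policy code are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ReasonCode:
    code: str
    message: str
    degraded: bool  # True when the outcome came from a fallback path


REASON_CODES: Dict[str, ReasonCode] = {
    rc.code: rc
    for rc in (
        ReasonCode("CREDIT_DATA_TIMEOUT",
                   "External credit data timeout. Minimum policy applied.", True),
        ReasonCode("FRAUD_PROVIDER_UNAVAILABLE",
                   "Fraud provider unavailable. Referred under degraded risk policy.", True),
        ReasonCode("INCOME_MISSING",
                   "Mandatory income attribute missing. Application sent for review.", True),
        ReasonCode("IDENTITY_PAYLOAD_INVALID",
                   "Identity payload invalid. Request rejected due to input quality.", True),
        ReasonCode("MODEL_UNAVAILABLE",
                   "Model service unavailable. Rules-only fallback path used.", True),
        # Hypothetical ordinary policy code, included for contrast.
        ReasonCode("POLICY_AGE_BELOW_MINIMUM",
                   "Applicant age below policy threshold.", False),
    )
}


def explain(code: str) -> str:
    """Return the message for a code; unknown codes raise KeyError."""
    return REASON_CODES[code].message
```

Raising on unknown codes keeps the registry authoritative: a fallback path cannot emit a code that monitoring and support have never seen.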

Test failure modes as first-class scenarios

Many teams test policy changes carefully and failure behavior poorly. That is backwards. Outages and missing data are not edge cases. They are part of production reality.

Your test plan should include:

- Provider timeout

- Partial provider response

- Null or empty mandatory field

- Stale cached response

- Malformed payload from upstream system

- Model endpoint unavailable

- Fallback thresholds triggered correctly

- Reason codes written correctly

- Decision traces showing normal path versus degraded path

For each scenario, verify 3 things. First, the returned decision is correct. Second, the path taken is traceable. Third, the operational signal is visible in monitoring.
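A few of these scenarios can be written as plain test functions that simulate the provider with `unittest.mock.Mock`. The `decide` function here is a hypothetical stand-in for the logic under test, and the threshold and reason codes are assumptions. Verifying the monitoring signal is left out of this sketch.

```python
from unittest.mock import Mock


def decide(fetch_bureau):
    """Hypothetical decision function: one provider call, then policy."""
    try:
        report = fetch_bureau()
    except TimeoutError:
        return {"result": "refer", "path": "degraded", "reason": "BUREAU_TIMEOUT"}
    if "score" not in report:
        return {"result": "refer", "path": "degraded", "reason": "BUREAU_PARTIAL_RESPONSE"}
    result = "approve" if report["score"] >= 600 else "decline"
    return {"result": result, "path": "normal", "reason": "FULL_POLICY"}


def test_provider_timeout_uses_degraded_path():
    provider = Mock(side_effect=TimeoutError)  # every call raises TimeoutError
    outcome = decide(provider)
    assert outcome["result"] == "refer"           # decision is correct
    assert outcome["path"] == "degraded"          # path is traceable
    assert outcome["reason"] == "BUREAU_TIMEOUT"  # cause is recorded
    provider.assert_called_once()


def test_partial_response_is_not_treated_as_decline():
    outcome = decide(Mock(return_value={}))  # payload without a score
    assert outcome == {
        "result": "refer", "path": "degraded", "reason": "BUREAU_PARTIAL_RESPONSE",
    }


def test_normal_path_is_marked_normal():
    outcome = decide(Mock(return_value={"score": 700}))
    assert outcome == {"result": "approve", "path": "normal", "reason": "FULL_POLICY"}
```

These functions follow the `test_` naming convention, so a runner such as pytest would collect them, and they can also be called directly.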

Monitor degraded-path volume like a risk metric

If fallback paths activate often, that is not just a technical issue. It is a decision quality issue.

You should monitor:

- Rate of decisions using degraded paths

- Volume by failure cause

- Approval, decline, and refer rates under fallback logic

- Conversion and loss performance for fallback-approved cases

- Manual review load created by missing data

- Recovery time after dependency failure

This helps you answer practical questions. Is a provider outage pushing too many cases into review? Is the minimum hardcoded strategy too restrictive? Are missing fields coming from one integration or one channel?
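As a starting point, several of these metrics can be computed directly from decision records. The sketch assumes each record is a dictionary with `result`, `path`, and `reason` keys, which is an illustrative shape and not a standard format.

```python
from collections import Counter
from typing import Any, Dict, List


def degraded_path_summary(decisions: List[Dict[str, str]]) -> Dict[str, Any]:
    """Summarize how often, why, and with what results fallback paths ran."""
    total = len(decisions)
    degraded = [d for d in decisions if d["path"] != "normal"]
    return {
        # Share of all decisions that used any degraded path.
        "degraded_rate": len(degraded) / total if total else 0.0,
        # Volume by failure cause.
        "by_cause": Counter(d["reason"] for d in degraded),
        # Approve, decline, and refer counts under fallback logic.
        "results_under_fallback": Counter(d["result"] for d in degraded),
    }


sample = [
    {"result": "approve", "path": "normal", "reason": "FULL_POLICY"},
    {"result": "decline", "path": "normal", "reason": "FULL_POLICY"},
    {"result": "refer", "path": "degraded", "reason": "BUREAU_TIMEOUT"},
    {"result": "refer", "path": "degraded", "reason": "FRAUD_UNAVAILABLE"},
]
summary = degraded_path_summary(sample)
```

In practice these figures would be tracked per time window and per channel, so a spike tied to one provider or one integration stands out.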

For lending teams, this article on metrics to monitor is a useful reference for connecting decision performance to operational and risk outcomes.

Implementation principles that keep the strategy maintainable

To keep the decision logic usable over time, follow a few rules.

- Keep fallback rulesets separate from primary rulesets, but linked by explicit routing logic.

- Version everything, including emergency rules and temporary thresholds.

- Document dependency assumptions for each decision flow.

- Limit fallback scope so degraded logic stays understandable.

- Review fallback outcomes regularly with risk, engineering, and operations.

- Do not bury technical exceptions in application code where policy teams cannot trace them.
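The first two principles could look like this in code: primary and fallback rulesets are separate, versioned objects, and a single routing function chooses between them. The ruleset names, version strings, the score rule, and the `dependencies_healthy` flag are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class Ruleset:
    name: str
    version: str
    evaluate: Callable[[Dict[str, Any]], str]


# Hypothetical rulesets kept separate from each other.
PRIMARY = Ruleset(
    "underwriting_primary", "2.3.0",
    lambda app: "approve" if app.get("score", 0) >= 600 else "refer",
)
FALLBACK = Ruleset("underwriting_fallback", "1.0.1", lambda app: "refer")


def route(dependencies_healthy: bool) -> Ruleset:
    """Explicit routing logic: the only place that picks a ruleset."""
    return PRIMARY if dependencies_healthy else FALLBACK


def decide(app: Dict[str, Any], dependencies_healthy: bool) -> Dict[str, str]:
    ruleset = route(dependencies_healthy)
    return {
        "result": ruleset.evaluate(app),
        # Name and version go into the trace for both normal and fallback paths.
        "ruleset": ruleset.name,
        "ruleset_version": ruleset.version,
    }
```

Recording the ruleset name and version on every outcome lets policy and engineering teams reconstruct which rules were in force for any past decision.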

If business users manage policy, they still need visibility into fallback behavior. If engineering owns integrations, they still need to understand policy impact. A decision platform should give both groups the same decision trace.

Final thought

A strong automated decision strategy does not assume perfect inputs or perfect uptime. It defines what to do when systems fail, data goes missing, or dependencies return incomplete results.

That is what makes decision logic production-ready. Not complexity. Not the number of providers connected. Clear policy under normal conditions, clear policy under degraded conditions, and full auditability across both.

If you build failover and missing data handling into the decision flow from the start, your platform keeps making deterministic, explainable decisions when it matters most.