Deployment of Risk Management Decision Engines in Consumer Lending

Published on: 2026-04-06 11:00:37

Consumer lending moves fast. Loan volumes change, fraud patterns shift, and credit performance can drift before teams notice. A decision engine gives lenders a way to evaluate applications with explicit decision logic, consistent rules, and full traceability.

The point is not to replace risk teams. The point is to give them a system that applies policy in a repeatable way, records every outcome, and can change when the data changes. That matters most in consumer lending, where a small rule change can affect approval rates, losses, and operational cost.

Start with rule prioritization

Rule order matters. A decision engine should evaluate the cheapest and most certain checks first, then move to more expensive data sources only when needed. That keeps decisioning efficient and avoids paying for data you do not need.

In practice, internal rules should come before external checks. Internal policy rules can reject an application early if it clearly fails eligibility. Only applications that pass those checks should move to outside data sources, bureau checks, or third-party risk signals.
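As a rough illustration, the ordering can be sketched in a few lines of Python. Everything here is an assumption made for the example: the `Check` structure, the cost figures, and the two checks themselves. The bureau check reads a precomputed field instead of calling a real provider.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Check:
    name: str
    cost: float                            # assumed cost per call, e.g. in cents
    run: Callable[[dict], Optional[str]]   # returns a reject reason, or None to pass


def evaluate(application: dict, checks: list) -> dict:
    """Run checks from cheapest to most expensive and stop at the first reject."""
    spent = 0.0
    executed = []
    for check in sorted(checks, key=lambda c: c.cost):
        spent += check.cost
        executed.append(check.name)
        reason = check.run(application)
        if reason is not None:
            return {"decision": "reject", "reason": reason,
                    "executed": executed, "cost": spent}
    return {"decision": "continue", "reason": None,
            "executed": executed, "cost": spent}


def min_income(app: dict) -> Optional[str]:
    # Internal policy rule: free to evaluate (threshold is hypothetical)
    return "income_below_minimum" if app["monthly_income"] < 1000 else None


def bureau_check(app: dict) -> Optional[str]:
    # Stand-in for a paid bureau call; reads a precomputed field here
    return "bureau_default_on_file" if app.get("bureau_default") else None


checks = [
    Check("bureau_check", cost=50.0, run=bureau_check),
    Check("min_income", cost=0.0, run=min_income),
]

result = evaluate({"monthly_income": 800, "bureau_default": True}, checks)
print(result["decision"], result["executed"], result["cost"])
```

The application fails the free internal rule, so the paid bureau check never runs and the recorded cost stays at zero.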

Use hard rejects for absolute risk

Some applications fall outside the portfolio's risk appetite no matter what other data shows. The rules that catch them are often called knock-out (KO) criteria, hard rejects, or lending eligibility rules. If one of these rules is triggered, the engine should stop the flow and reject the case immediately.

This is useful for obvious disqualifiers such as age limits, policy exclusions, or country restrictions. It saves cost, reduces manual work, and keeps the lending policy clear. It also protects the portfolio by removing cases that should never move forward.

A good hard reject rule is narrow, explicit, and auditable. If a risk team cannot explain why the rule exists, it should not be in the decision flow.
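One way to keep hard rejects narrow and auditable is to store each rule with a reason code and a written rationale. The sketch below assumes that shape. The `HardReject` class, the age limit, and the country list are hypothetical values, not policy from any real lender.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class HardReject:
    code: str                          # reason code stored with the decision
    rationale: str                     # why the rule exists, for audit
    triggered: Callable[[dict], bool]  # True means reject immediately


# Hypothetical policy values for illustration only
MIN_AGE = 18
ALLOWED_COUNTRIES = {"CZ", "SK", "PL"}

HARD_REJECTS = [
    HardReject("KO_AGE", "Applicant must meet the minimum age",
               lambda a: a["age"] < MIN_AGE),
    HardReject("KO_COUNTRY", "Product is not offered in this country",
               lambda a: a["country"] not in ALLOWED_COUNTRIES),
]


def first_hard_reject(application: dict) -> Optional[HardReject]:
    """Return the first triggered hard reject, or None if the case may continue."""
    for rule in HARD_REJECTS:
        if rule.triggered(application):
            return rule
    return None


hit = first_hard_reject({"age": 17, "country": "CZ"})
print(hit.code if hit else "no hard reject")
```

Because the rationale travels with the rule, a rule nobody can explain becomes easy to spot in review.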

Build flexible rules, not static logic

Risk policy changes. Product terms change. Fraud patterns change. A decision engine should let teams update rules without rewriting the full process each time.

That means the platform should support rule activation, deactivation, versioning, and testing. A team should be able to switch a rule on or off, compare its effect, and roll back if performance drops. This is basic control, not a nice-to-have.
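A minimal sketch of that control, assuming a rule is just a score threshold with a version history. The `ManagedRule` and `RuleVersion` names are made up for the example and do not describe any particular platform. Rollback is modeled as publishing the previous threshold again, so the history stays complete.

```python
from dataclasses import dataclass, field


@dataclass
class RuleVersion:
    version: int
    threshold: int
    active: bool = True


@dataclass
class ManagedRule:
    name: str
    history: list = field(default_factory=list)

    def publish(self, threshold: int) -> RuleVersion:
        """Append a new version; earlier versions stay in the history."""
        new = RuleVersion(version=len(self.history) + 1, threshold=threshold)
        self.history.append(new)
        return new

    def current(self) -> RuleVersion:
        return self.history[-1]

    def rollback(self) -> RuleVersion:
        """Re-publish the previous threshold as a new version."""
        if len(self.history) < 2:
            raise ValueError("no earlier version to roll back to")
        return self.publish(self.history[-2].threshold)

    def set_active(self, active: bool) -> None:
        self.current().active = active

    def passes(self, score: int) -> bool:
        rule = self.current()
        # An inactive rule is skipped, so it never blocks the application
        return (not rule.active) or score >= rule.threshold


min_score = ManagedRule("min_score")
min_score.publish(600)             # version 1
min_score.publish(640)             # version 2, stricter
strict = min_score.passes(620)     # evaluated under version 2
min_score.rollback()               # version 3 restores threshold 600
restored = min_score.passes(620)
min_score.set_active(False)        # switch the rule off
skipped = min_score.passes(100)
print(strict, restored, skipped)
```

Keeping the rolled-back version in the history means a later audit can still see that the stricter threshold was live for a period.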

Flexible decision logic is especially important when the lender adds new products or enters new markets. A policy that works for one segment may be too strict or too loose for another. The engine should let teams adjust thresholds, routing, and outcomes with minimal disruption.

Keep policy changes measurable

Every rule update should have a clear reason and a measurable effect. If a score threshold changes, teams should know how approvals, losses, and manual reviews moved after the change. Without that feedback loop, rule changes become guesswork.
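To make that concrete, here is a small sketch that replays the same applications under two thresholds and compares the outcome mix. The scores are made up, and the 20-point review band is an assumption. Loss impact is left out because it needs repayment data that only arrives after booking.

```python
def outcome(score: int, approve_at: int, review_band: int = 20) -> str:
    """Approve at or above the threshold, review just below it, else decline."""
    if score >= approve_at:
        return "approve"
    if score >= approve_at - review_band:
        return "review"
    return "decline"


def rates(scores: list, approve_at: int) -> dict:
    """Share of applications ending in each outcome for a given threshold."""
    counts = {"approve": 0, "review": 0, "decline": 0}
    for s in scores:
        counts[outcome(s, approve_at)] += 1
    return {k: v / len(scores) for k, v in counts.items()}


# Made-up scores for illustration only
scores = [580, 600, 610, 625, 640, 655, 670, 690, 710, 730]

before = rates(scores, approve_at=620)
after = rates(scores, approve_at=650)
delta = {k: round(after[k] - before[k], 2) for k in before}

print("before:", before)
print("after: ", after)
print("delta: ", delta)
```

On this sample, raising the threshold from 620 to 650 cuts the approval rate from 70% to 50% and moves most of the difference into declines, not manual review.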

This is where structured decision traces matter. They let teams compare versions, identify which rules fired, and see how the engine behaved under real traffic. That is the difference between controlled iteration and blind tuning.

Keep detailed records of every decision

Traceability is not optional in lending. The engine should record every input, every rule fired, every intermediate outcome, and the final decision. If a case is approved, declined, routed to manual review, or priced differently, the system should show why.

These records serve three jobs. First, they support internal audit and compliance review. Second, they help risk teams debug policy and model behavior. Third, they create the historical data needed to improve future decisioning.

Without decision traces, teams cannot answer basic questions. Why was this case rejected? Which rule caused the decline? Did the scorecard behave as expected? Did a later rule override an earlier one? A lending platform should answer those questions in seconds, not days.
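A decision trace does not need to be complicated. The sketch below assumes a simple first-match rule flow and records the inputs, each rule evaluated, and the rule that decided the case. The field names and the two rules are illustrative, not a schema from any specific engine.

```python
import json
from datetime import datetime, timezone


def decide_with_trace(application: dict, rules: list) -> dict:
    """Evaluate rules in order and record what the engine saw and did."""
    trace = {
        "decided_at": datetime.now(timezone.utc).isoformat(),
        "inputs": dict(application),   # snapshot of the data at decision time
        "rules": [],
        "decision": "approve",         # default when no rule fires
        "decided_by": None,
    }
    for name, predicate, rule_outcome in rules:
        fired = bool(predicate(application))
        trace["rules"].append({
            "rule": name,
            "fired": fired,
            "outcome": rule_outcome if fired else None,
        })
        if fired:
            trace["decision"] = rule_outcome
            trace["decided_by"] = name
            break
    return trace


# Hypothetical rules: (name, predicate, outcome when fired)
rules = [
    ("KO_AGE", lambda a: a["age"] < 18, "decline"),
    ("THIN_FILE", lambda a: a["tradelines"] < 2, "manual_review"),
]

trace = decide_with_trace({"age": 34, "tradelines": 1}, rules)
print(json.dumps(trace, indent=2))
```

With a record like this, "which rule caused the outcome?" becomes a lookup on `decided_by` instead of an investigation.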

Detailed records also matter after origination. Performance review, collections strategy, and portfolio monitoring all depend on knowing what the engine saw at the time of decision. If the lender cannot reconstruct the original rule path, post-book analysis loses much of its value.

For more on how traceability supports decision analysis, see Tracing Models and Decisions.

Use modern modeling methods where they add value

In consumer lending, simple rules alone are not enough. They work for clear policy checks, but they do not capture every pattern in portfolio risk. That is where scorecards, statistical models, and machine learning signals can help.

The right approach depends on the product. For products with flexible limits and rates, models can support risk-based pricing, credit line assignment, and routing to manual review. They help separate good, borderline, and high-risk applications more accurately than static rules alone.

That does not mean the engine should turn into a black box. The model output should be one input in a controlled decision flow, not the whole decision. Risk teams still need to see what the model returned, how it was combined with policy rules, and why the final outcome was chosen.

Scorecards still matter

Scorecards remain useful because they are stable, explainable, and easy to operationalize. They help lenders segment applications, set thresholds, and keep model behavior understandable for business and compliance teams.

In many lending setups, scorecards work best when combined with rule layers. The scorecard estimates risk. The rules enforce policy. The engine then decides whether to approve, decline, adjust terms, or send the case to manual review.

That structure keeps the process deterministic. It also makes the decision logic easier to test, monitor, and audit.
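The layering can be sketched as a points-based scorecard wrapped in policy rules. The bins, points, base score, and cut-offs below are invented for the example. In a real deployment they would come from a fitted and validated model.

```python
INF = float("inf")

# Hypothetical scorecard: (exclusive upper bound, points) per attribute
SCORECARD = {
    "age": [(25, 10), (40, 25), (INF, 35)],
    "months_employed": [(6, 0), (24, 15), (INF, 30)],
    "debt_to_income": [(0.2, 40), (0.4, 20), (INF, 0)],
}
BASE_POINTS = 500  # assumed starting score


def score(application: dict) -> int:
    """Sum the base points and the points of the first matching bin per attribute."""
    total = BASE_POINTS
    for attribute, bins in SCORECARD.items():
        value = application[attribute]
        for upper, points in bins:
            if value < upper:
                total += points
                break
    return total


def decide(application: dict, approve_at: int = 570, review_at: int = 540):
    """Policy rule first, then the scorecard, then the threshold mapping."""
    if application["age"] < 18:
        return "decline", None          # policy rule, scorecard not evaluated
    s = score(application)
    if s >= approve_at:
        return "approve", s
    if s >= review_at:
        return "manual_review", s
    return "decline", s


strong = decide({"age": 32, "months_employed": 30, "debt_to_income": 0.25})
borderline = decide({"age": 30, "months_employed": 12, "debt_to_income": 0.30})
weak = decide({"age": 22, "months_employed": 3, "debt_to_income": 0.50})
print(strong, borderline, weak)
```

The same inputs always produce the same score and the same outcome, which is what makes this structure straightforward to test and audit.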

For a deeper view of scorecard deployment, read Implementing scorecards in rule engines.

Design the engine for manual review and automation

A lending decision engine should support both straight-through processing and human review. Not every application should be auto-approved or auto-declined. Some cases need a closer look.

The engine should route borderline cases based on clear criteria. That might include a thin credit file, conflicting data, or a score near the approval threshold. Manual review should be a defined path, not an exception handled outside the system.
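Here is a short sketch of routing with explicit review criteria. The thresholds, the 20% income tolerance, and the field names are assumptions chosen for the example. Each reason is returned with the route so the reviewer sees why the case landed in the queue.

```python
def review_reasons(application: dict, score: int,
                   approve_at: int = 620, band: int = 15) -> list:
    """Collect every reason a case should go to manual review."""
    reasons = []
    if application["tradelines"] < 2:
        reasons.append("thin_credit_file")
    declared = application["declared_income"]
    verified = application["verified_income"]
    # Conflicting data: verified income differs from declared by more than 20%
    if verified is not None and abs(declared - verified) > 0.2 * declared:
        reasons.append("income_mismatch")
    if abs(score - approve_at) <= band:
        reasons.append("score_near_threshold")
    return reasons


def route(application: dict, score: int, approve_at: int = 620) -> dict:
    reasons = review_reasons(application, score, approve_at)
    if reasons:
        return {"route": "manual_review", "reasons": reasons}
    auto = "approve" if score >= approve_at else "decline"
    return {"route": auto, "reasons": []}


applicant = {"tradelines": 5, "declared_income": 3000, "verified_income": 2900}
near = route(applicant, score=628)
clear = route(applicant, score=700)
print(near)
print(clear)
```

The first case goes to review because the score sits within 15 points of the threshold. The second is approved straight through.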

This matters because manual review needs the same discipline as automation. Review teams should see the decision trace, the rules that fired, and the data used in the assessment. Without that, manual work becomes inconsistent and hard to manage.

Adaptability is part of risk control

A lending portfolio does not stay still. Economic conditions shift. Applicant quality changes. Fraud pressure rises. A good decision engine must adapt without losing control.

That is why rule management, model monitoring, and traceability need to work together. If performance weakens, teams should be able to see the signal, isolate the cause, and update the flow safely. If a rule stops working, it should be easy to disable. If a model drifts, the engine should make that visible.
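One common way to make drift visible is the population stability index (PSI), which compares the score distribution at model build time with the distribution seen in recent traffic. PSI is our choice for this illustration, not a method the article prescribes, and the bin shares below are made up.

```python
import math


def psi(expected: list, actual: list, floor: float = 1e-6) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    Both inputs are shares per score bin. The floor avoids log(0) on empty bins.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, floor), max(a, floor)
        total += (a - e) * math.log(a / e)
    return total


# Made-up shares of applications per score bin
baseline = [0.10, 0.20, 0.40, 0.20, 0.10]   # at model build time
current = [0.05, 0.15, 0.35, 0.25, 0.20]    # recent traffic

value = psi(baseline, current)
print(round(value, 3))
```

A frequently cited rule of thumb treats values below 0.1 as stable and values above 0.25 as a significant shift. Those cut-offs are conventions, so each team should set its own alert levels.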

This is especially important when lenders add new external data sources. Third-party signals can improve decisioning, but only if they are tested before launch and given a clear precedence relative to existing rules. External data should support the policy, not override governance.

For a practical view of rule behavior under change, see How to implement an automated decision strategy that keeps working under failure.

Where external data fits

External data can improve underwriting, fraud detection, and identity checks. But it should be used with discipline. If every application calls every provider, cost rises fast and latency follows.

A better design is staged evaluation. Start with internal rules. Use external data only when the case needs it. Then combine the result with the rest of the decision flow. This keeps the process efficient and makes the data strategy easier to audit.

Teams should also decide which external signals are useful for which part of the flow. Some data sources help with identity. Others help with risk. Others help with device or fraud patterns. The engine should apply each signal where it belongs.
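A sketch of that mapping, assuming each provider is tagged with the stage it serves and is called only when the flow reaches that stage. The provider names are hypothetical, and the lambdas stand in for real API clients by reading fields from the application.

```python
# Hypothetical providers: name -> (stage it serves, stand-in client)
PROVIDERS = {
    "id_check": ("identity", lambda app: {"id_verified": app["doc_ok"]}),
    "device_risk": ("fraud", lambda app: {"device_risk": app["device_risk"]}),
    "credit_bureau": ("risk", lambda app: {"bureau_score": app["bureau_score"]}),
}


def fetch_signals(stage: str, application: dict, calls: list) -> dict:
    """Call only the providers registered for this stage and log each call."""
    signals = {}
    for name, (purpose, client) in PROVIDERS.items():
        if purpose == stage:
            calls.append(name)
            signals.update(client(application))
    return signals


def run(application: dict) -> dict:
    """Staged flow: identity, then fraud, then risk. Stop as soon as one decides."""
    calls = []
    if not fetch_signals("identity", application, calls)["id_verified"]:
        return {"decision": "decline", "calls": calls}
    if fetch_signals("fraud", application, calls)["device_risk"] == "high":
        return {"decision": "manual_review", "calls": calls}
    bureau = fetch_signals("risk", application, calls)["bureau_score"]
    decision = "approve" if bureau >= 620 else "decline"   # assumed cut-off
    return {"decision": decision, "calls": calls}


failed_id = run({"doc_ok": False, "device_risk": "low", "bureau_score": 700})
full = run({"doc_ok": True, "device_risk": "low", "bureau_score": 700})
print(failed_id)
print(full)
```

The `calls` list doubles as an audit record of which providers were actually used for a case.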

For examples of integrating outside data into decisioning, see Integrating external data sources into Decisimo and Data sources you can use.

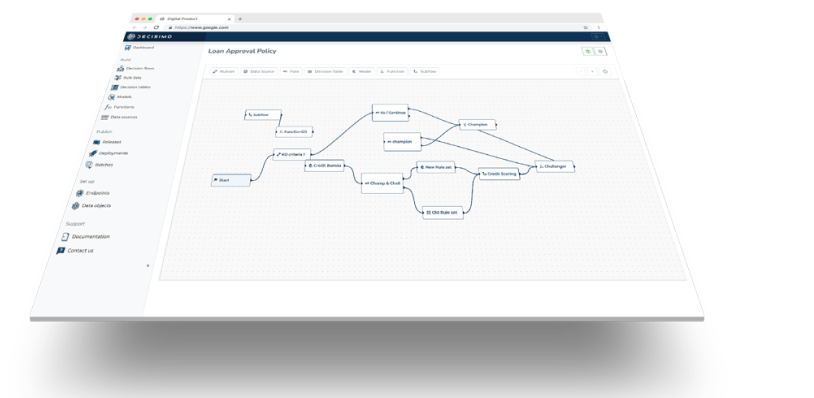

What good decision logic looks like in practice

A strong consumer lending decision flow usually follows a clear pattern:

- Check hard policy rules first.
- Reject obvious ineligible cases early.
- Run additional checks only when needed.
- Apply scorecards or models where they add value.
- Route ambiguous cases to manual review.
- Log every rule, input, and outcome.
- Use the history to improve the next version.

That structure keeps the system understandable. It also keeps the team in control. The engine becomes a governed process, not a pile of disconnected checks.

Common mistakes to avoid

Many lenders make the same mistakes when they deploy decision engines.

First, they put expensive checks too early. That wastes budget and slows the flow.

Second, they build rules that are hard to change. When policy shifts, the team then needs engineering support for basic updates.

Third, they do not log enough detail. When a decision is challenged, they cannot reconstruct what happened.

Fourth, they overuse models without enough governance. A model can help, but it still needs explicit policy around it.

Fifth, they forget the post-book view. A good approval decision is not enough if the engine cannot support later monitoring and collections strategy.

Conclusion

Risk management decision engines in consumer lending work best when they are ordered, flexible, and fully traceable. Start with internal rules. Use hard rejects for absolute risk. Add external data only when it adds value. Keep detailed records. Use scorecards and models where they improve accuracy.

The goal is not more complexity. The goal is better control. A good engine helps lenders make faster decisions, explain those decisions, and change policy without breaking the process. That is how decision logic stays useful as the market changes.

Automate smarter.